Clinical Outcome Assessment (COA) Qualification Program: Frequently Asked Questions

Below are frequently asked questions (FAQs) about CDER’s COA Qualification Program. We also recommend reviewing the general COA FAQ web page.

General CDER COA Qualification Program Information

- Is DDT COA qualification a requirement?

- How do I get started with the CDER COA Qualification Program?

- How does CDER prioritize what submissions to accept into the CDER COA Qualification Program?

- Who participates in a COA Qualification Review Team (QRT)?

- How many development projects are currently in the CDER COA Qualification Program?

COA Qualification Considerations and Requirements

- Are only newly developed COA instruments eligible for qualification?

- If a COA has been used to support claims in drug labeling, is it automatically considered qualified by CDER?

- Am I required to use ONLY qualified COAs for clinical trials?

- Must I make my qualified COA free of charge for public use?

COA Development and Qualification Process

- What process should I follow when selecting or developing a COA?

- What is the standard of evidence for COA qualification?

Other COA Qualification Programs

- What is the relationship between CDER’s and CDRH’s qualification programs?

GENERAL COA QUALIFICATION PROGRAM INFORMATION

Is DDT COA qualification a requirement?

No. A COA does not need to be qualified to be used successfully in clinical trials and drug development to support regulatory decision-making. Please be aware that formal regulatory qualification through the Drug Development Tool (DDT) qualification process takes multiple years and requires a high degree of commitment.

How do I get started with the CDER COA Qualification Program?

We strongly encourage you to watch the COA Qualification Program orientation session, which explains the requirements of the qualification process. You may also download the slide deck. As noted above, DDT COA qualification is not required.

How does CDER prioritize what submissions to accept into the CDER COA Qualification Program?

CDER is committed to the strategic growth of the CDER COA Qualification Program. Because interest in the program is growing and resources are limited, we developed a framework for deciding whether to prioritize or defer acceptance of a submission into the program. In general, we weigh the public health benefit and scientific merit of the proposed submission, taking into consideration available CDER staff resources. Specifically, we consider the following:

- Does the proposed COA fill a critical measurement gap (i.e., is drug development stalled or slowed)?

- Does the proposed COA represent significant improvement over currently available, acceptable COAs?

- Is the COA patient-centric (i.e., does it measure something relevant and important to patients in their daily lives that is not being evaluated in that clinical context because acceptable assessments are lacking)?

- Are other efforts already underway in the proposed disease area either under the CDER COA Qualification Program or another mechanism?

- If the proposed tool is already deemed acceptable, are there other mechanisms for communicating its acceptability without a formal qualification process (e.g., indication-specific guidance)?

- Is the requestor willing to develop a new tool or modify an existing tool to make improvements as needed based on research findings?

Who participates in a COA Qualification Review Team (QRT)?

The COA QRT comprises representatives from CDER’s Division of Clinical Outcome Assessment (DCOA), the appropriate therapeutic area review division(s), the Office of Biostatistics, and others as appropriate. Representatives from other FDA Centers may also participate when appropriate.

How many development projects are currently in the CDER COA Qualification Program?

Please visit Qualified COAs for a list of qualified COAs, and COA Program Submissions for a list of COAs in the qualification program for which qualification decisions have yet to be made. Both lists also include information about each COA’s submissions.

COA QUALIFICATION CONSIDERATIONS AND REQUIREMENTS

Are only newly developed COA instruments eligible for qualification?

No. Both newly developed and existing measures will be considered for the CDER COA Qualification Program.

If a COA has been used to support claims in drug labeling, is it automatically considered qualified by CDER?

No. COAs are qualified only through the Drug Development Tool (DDT) qualification process. We encourage you to discuss the use of COAs to support a clinical trial endpoint in an individual drug development program with the appropriate FDA review division as early as possible.

Am I required to use ONLY qualified COAs for clinical trials?

No. While we believe there are benefits to using a qualified COA, you are not required to use one to support a clinical trial endpoint. We encourage you to discuss the use of COAs in an individual drug development program with the appropriate FDA review division as early as possible.

Must I make my qualified COA free of charge for public use?

No. While qualified COAs must be made publicly available, you may still charge a reasonable fee for their use. Qualification does not displace any intellectual property, copyright, or ownership rights.

COA DEVELOPMENT AND QUALIFICATION PROCESS

What process should I follow when selecting or developing a COA?

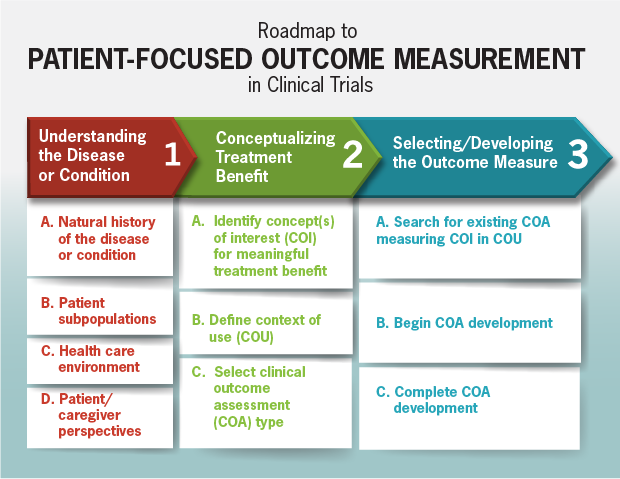

Roadmap to Patient-Focused Outcome Measurement in Clinical Trials



The Roadmap (above) outlines the process for selecting or developing a COA. Column one describes information to consider when identifying the targeted context of use and the concept of interest for clinical trial measurement (column two). This information can then be used to identify the appropriate type of outcome assessment to select or develop (column three).

The type of COA to select or develop, whether a patient-reported outcome (PRO), clinician-reported outcome (ClinRO), observer-reported outcome (ObsRO), or performance outcome (PerfO) measure, depends on the target concept of interest and the context of use (e.g., patient population). For example, if pain intensity is the concept of interest in a patient population that can respond for themselves, a PRO is most appropriate. If clinical judgment is required to interpret an observation, a ClinRO is appropriate. If the concept of interest can only be adequately captured by observation in daily life (outside of a healthcare setting) and the patient cannot report for themselves, an ObsRO is appropriate. When it would be useful to observe the patient performing one or more defined tasks that demonstrate functional performance, a PerfO may be appropriate.

What is the standard of evidence for COA qualification?

The measurement principles of content validity, reliability, construct validity, and ability to detect change apply to all COA types. We often refer COA developers to the following resources:

- FDA’s Patient-Reported Outcome (PRO) COA guidance: although developed for PRO measures, many of its recommendations also apply to the development of clinician-reported outcome (ClinRO), observer-reported outcome (ObsRO), and performance outcome (PerfO) measures.

- ISPOR Task Force publications, particularly those on content validity.

OTHER COA QUALIFICATION PROGRAMS

What is the relationship between CDER’s and CDRH’s qualification programs?

CDRH has its own separate qualification process for Medical Device Development Tools, including COAs. Requestors should consider whether their COA is applicable to medical device studies and, when appropriate, submit it for qualification by CDRH. To learn more, please review the Medical Device Development Tools Draft Guidance for Industry, Tool Developers and FDA Staff.